In this post, I'll walk through the basics of Airflow and how to get started with Astronomer. We'll start here with Airflow. Apache Airflow is an open-source workflow management platform that helps you build data engineering pipelines. One of the biggest advantages of Airflow, and a reason it is so popular, is that you write your configuration in Python in the form of what is referred to as a DAG (Directed Acyclic Graph). Writing a DAG in Python means that you can leverage the suite of Python libraries available to do nearly anything you want. A common use case for Airflow is taking data from one source, transforming it over several steps, and loading it into a data warehouse. You can even leverage Airflow for feature engineering, where you apply data transformations in your data warehouse, creating new views of data.

Astronomer is a managed Airflow service that allows you to orchestrate workflows in a cloud environment. Homebrew will be the easiest way for you to install the Astronomer CLI.

XComArgs allow task dependencies to be abstracted and inferred as a result of the Python function invocation (no worries, you are going to understand that in a minute). Decorators automatically create tasks for some Operators such as the PythonOperator, BranchPythonOperator, etc. Custom XCom Backends let you use storage other than the Airflow metadatabase to store your XComs (data). In this tutorial, we will see XComArgs and Decorators. Custom XCom Backends will have a dedicated tutorial.

How to use XComArgs?

An XComArg represents an XCom push from a previous operator. Let me show you an example. It imports XComArg, and that XComArg is used to pull data from extract into transform_a and transform_b.
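To make the XComArg idea a bit more concrete, here is a minimal sketch in plain Python. It is not Airflow code: ToyXComArg and the store dictionary are hypothetical names invented for illustration, and the data values are made up. It only shows the principle of holding a reference to "whatever extract pushed" and resolving it later inside transform_a and transform_b.

```python
# Toy model of the XComArg idea. NOT Airflow's implementation:
# ToyXComArg and `store` are hypothetical names used for illustration only.

store = {}  # plays the role of the place where XComs are kept


class ToyXComArg:
    """A reference to the value pushed by a given task, resolved on demand."""

    def __init__(self, task_id, key="return_value"):
        self.task_id = task_id
        self.key = key

    def resolve(self):
        # Look the value up only when a downstream task actually needs it.
        return store[(self.task_id, self.key)]


def extract():
    # Made-up payload standing in for extracted data.
    return {"a": 2, "b": 5}


def transform_a(ref):
    return ref.resolve()["a"] * 10


def transform_b(ref):
    return ref.resolve()["b"] + 1


# The reference can exist before the upstream task has run.
extract_output = ToyXComArg("extract")

# "Run" extract and push its return value.
store[("extract", "return_value")] = extract()

# Both downstream tasks pull the same upstream value through the reference.
result_a = transform_a(extract_output)
result_b = transform_b(extract_output)
```

The point of the sketch is that transform_a and transform_b never name a storage key themselves. They receive a reference, and that reference is what tells you which upstream task they depend on.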

What is the Airflow Taskflow API?

The Airflow Taskflow API makes it easier to author DAGs without extra code. Therefore, you get a sort of natural flow to define tasks and dependencies. I'm not saying it becomes a pleasure to write DAGs, but almost. In addition, the Taskflow API makes it easy to share data between tasks, as you don't need to use the xcom_pull and xcom_push methods anymore. The Airflow Taskflow API has three main components: XComArgs, Decorators, and Custom XCom Backends.

A three-task example is easy to follow, but imagine how hard it can be to debug your DAG if you have implicit dependencies with thousands of tasks. Therefore, if you want to make implicit dependencies explicit and write DAGs with ease while making them more readable, say welcome to the Airflow Taskflow API.
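The "natural flow" of defining tasks and dependencies can be sketched without Airflow at all. The snippet below is a toy under stated assumptions: ToyDag, ToyResult, and the task method are hypothetical names, not Airflow's decorators. It shows the general mechanism: calling a decorated function registers a task, and passing one task's result to another is what records the dependency.

```python
# Toy illustration of decorator-based task definition.
# ToyDag / ToyResult are hypothetical; this is not how Airflow is implemented.

class ToyResult:
    """Placeholder for 'the value task X will return'."""

    def __init__(self, task_id):
        self.task_id = task_id


class ToyDag:
    def __init__(self):
        self.functions = {}  # task_id -> (callable, call arguments)
        self.upstream = {}   # task_id -> set of task_ids it consumes

    def task(self, fn):
        def invoke(*args):
            task_id = fn.__name__
            self.functions[task_id] = (fn, args)
            # Any ToyResult argument means "I need that task's output".
            self.upstream[task_id] = {
                a.task_id for a in args if isinstance(a, ToyResult)
            }
            return ToyResult(task_id)
        return invoke

    def run(self):
        values = {}

        def resolve(task_id):
            if task_id not in values:
                fn, args = self.functions[task_id]
                real_args = [
                    resolve(a.task_id) if isinstance(a, ToyResult) else a
                    for a in args
                ]
                values[task_id] = fn(*real_args)
            return values[task_id]

        for task_id in self.functions:
            resolve(task_id)
        return values


dag = ToyDag()


@dag.task
def extract():
    return [1, 2, 3]


@dag.task
def transform(rows):
    return [r * 10 for r in rows]


@dag.task
def load(rows):
    return sum(rows)


# One line declares both the data flow and the dependencies.
load(transform(extract()))
results = dag.run()
```

No explicit push or pull appears anywhere, and dag.upstream ends up describing exactly who consumes whose data.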

Here is another use case showing why the Taskflow API is incredible! Picture a DAG with three tasks: a, b, and c. Task c needs the data that tasks a and b return in order to print it on the standard output. However, look at the dependencies. Don't you see a problem here? Indeed, task c needs data from a and b, but it's impossible to know that by looking at the dependencies. A task needs data from another task, but you can't see that in the dependencies.
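The gap between declared dependencies and data dependencies can be spelled out with two small dictionaries. This is only a sketch: the declared edges below (a before b before c) are an assumption made for illustration, and hidden_dependencies is a hypothetical helper, not an Airflow function.

```python
# Declared edges: what you can read from the dependency definition.
# ASSUMPTION: a chain a -> b -> c, chosen only for illustration.
declared_upstream = {"a": set(), "b": {"a"}, "c": {"b"}}

# Data edges: which tasks' outputs each task actually reads.
xcom_pulls = {"a": set(), "b": set(), "c": {"a", "b"}}


def hidden_dependencies(declared, pulls):
    """Return, per task, the tasks it reads data from without a declared direct edge."""
    hidden = {}
    for task, sources in pulls.items():
        missing = sources - declared.get(task, set())
        if missing:
            hidden[task] = sorted(missing)
    return hidden


hidden = hidden_dependencies(declared_upstream, xcom_pulls)
```

Under that assumption, the declared edges say nothing about c reading the output of a, and even the edge from b to c looks like plain ordering rather than data sharing.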

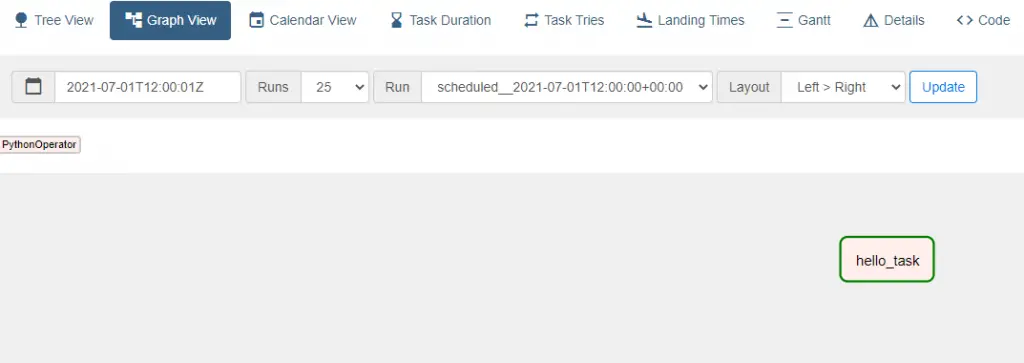

The Airflow Taskflow API is not available before Apache Airflow 2.0. Well, I guess your DAGs usually look like this: you import DAG and PythonOperator, write a few Python functions, and pass data around with calls such as print(ti.xcom_pull(task_ids='transform')). Your DAGs would be way more complex, but you get the idea. What does that mean? You have a DAG with three tasks that share data using XComs. Those three tasks call three Python functions: _extract, _transform, and _load. This way of writing DAGs was the only one for a long time, and I'm happy to tell you that this time is over. What if I tell you that you can get the same DAG with half the code, making it more readable and faster to build?
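To see the explicit push and pull pattern in isolation, here is a small stand-alone sketch. ToyTaskInstance is a hypothetical stand-in that only mimics the shape of the xcom_push and xcom_pull calls mentioned above. It is not Airflow's task instance, and the payloads are made up.

```python
# Toy stand-in for a task instance, just enough to show explicit XCom
# push/pull between three functions. NOT Airflow code.

class ToyTaskInstance:
    def __init__(self, task_id, store):
        self.task_id = task_id
        self._store = store  # shared dict playing the role of XCom storage

    def xcom_push(self, key, value):
        self._store[(self.task_id, key)] = value

    def xcom_pull(self, task_ids, key="return_value"):
        return self._store[(task_ids, key)]


def _extract(ti):
    ti.xcom_push("return_value", {"rows": [1, 2, 3]})


def _transform(ti):
    data = ti.xcom_pull(task_ids="extract")
    ti.xcom_push("return_value", [r * 10 for r in data["rows"]])


def _load(ti):
    # The source DAG prints the pulled value; here we return it instead.
    return ti.xcom_pull(task_ids="transform")


def run_pipeline():
    store = {}
    loaded = None
    # The order is hard-coded here, separately from who reads whose data.
    for task_id, fn in [("extract", _extract),
                        ("transform", _transform),
                        ("load", _load)]:
        loaded = fn(ToyTaskInstance(task_id, store))
    return loaded
```

Notice how each function has to know the task_id string of the task it reads from. That string is the only link between the tasks, which is exactly the boilerplate this style forces on you.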
